Now is the time to look at the CUDA programming model.

CUDA heterogeneous system

CUDA is a parallel computing platform and programming model with a small set of extensions to the C language. The CUDA programming model provides the two essential features for programming the GPU architectures:

- A way to launch a kernel and organize threads on the GPU.
- A way to transfer data between CPU and GPU and access memory on the GPU.

The CUDA programming model enables you to execute applications on heterogeneous computing systems by simply annotating code with a set of extensions to the C programming language. A heterogeneous system consists of a single host connected to one or more GPU accelerator devices over a PCI-Express bus; the host and each device have their own, separate memory. A GPU device is where the CUDA kernels execute. A typical heterogeneous system is shown in the figure below. Therefore, you should note the following distinction:

- Host - the CPU and its memory (host memory),
- Device - the GPU and its memory (device memory).

A CUDA program consists of the host program that runs on the host (usually, this is a desktop computer with a general-purpose CPU), and one or more kernels that run on GPU devices. Recall that a GPU device comprises several SMs. Each SM consists of tens or hundreds of streaming processors (CUDA cores).

We are already familiar with the GPU execution model (consisting of SMs, execution blocks, and the warp scheduler) that executes and schedules thread blocks, threads and warps. Now is the time to learn its programming counterpart. The most important concept to understand is the CUDA thread execution model that defines how kernels execute. A key component of the CUDA programming model is the kernel - the code that runs on the GPU device. As programmers, we write a kernel as a sequential program.
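To make the idea of a kernel written as a sequential program more concrete, here is a minimal sketch of launching a kernel and organizing threads into blocks. The names `hello_from_thread` and `launch_hello` are hypothetical and used only for illustration; the kernel body is ordinary sequential code that every launched thread executes with its own index values.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Kernel: sequential code for ONE thread. Every launched thread runs it,
// and only the built-in index variables differ between threads.
__global__ void hello_from_thread()
{
    // Index of this thread across the whole grid of blocks.
    int global_id = blockIdx.x * blockDim.x + threadIdx.x;
    printf("Hello from thread %d (block %d, thread %d)\n",
           global_id, blockIdx.x, threadIdx.x);
}

// Hypothetical host-side helper (an assumption of this sketch):
// launches num_blocks blocks of threads_per_block threads each
// and waits for the device to finish.
cudaError_t launch_hello(int num_blocks, int threads_per_block)
{
    hello_from_thread<<<num_blocks, threads_per_block>>>();

    // A kernel launch returns no value, so launch errors are queried separately.
    cudaError_t err = cudaGetLastError();
    if (err != cudaSuccess)
        return err;

    // The launch is asynchronous with respect to the host: wait for completion.
    return cudaDeviceSynchronize();
}
```

Calling `launch_hello(2, 4)` from the host program should print one line per thread, eight in total; the order of the lines coming from different blocks is not guaranteed.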

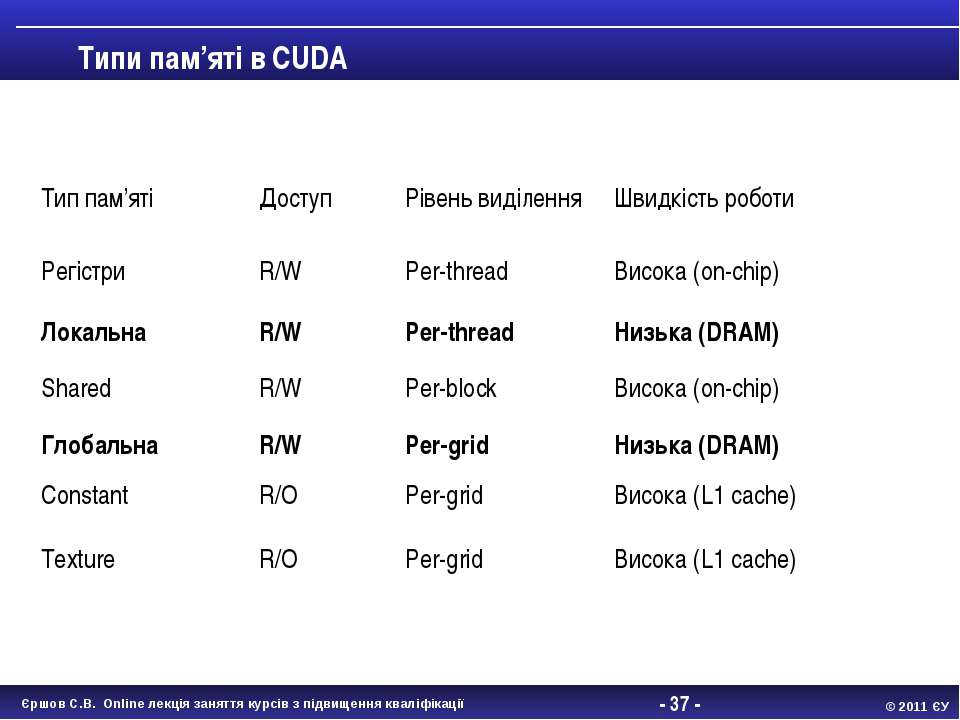

So far, we have learned how a GPU is built and what streaming multiprocessors and CUDA cores are. We are familiar with kernel execution, thread and thread block scheduling, and instruction dispatching. Moreover, we now understand the GPU memory hierarchy.

Allocate memory and transfer data

Allocating and releasing the memory on the GPU

In the previous example we learned how to start a GPU kernel. What was missing for it to be able to do anything meaningful is some data. Here, we will learn how to transfer the data from host to device and back, and how to do basic operations on it, using an example of element-wise vector addition. Let us first go through the functions we are going to need.

In CUDA, the developer controls the data flow between host (CPU) and device (GPU) memory. To do so, one must declare the buffers that will be located in the device memory. It is usually convenient to “mirror” the host buffers, declaring and allocating buffers for the same data of the same size on both host and device; however, this is not a requirement. Note that in CUDA, a device buffer is a basic pointer, and it can easily be confused with a host pointer. Using a device buffer on the host will most likely lead to a segmentation fault, so one must keep track of where the buffers are located. It is advisable to name buffers with a prefix that indicates where the buffer is located, e.g. use the h_ prefix for host memory and the d_ prefix for device memory.

Declaration of the device buffer is as simple as it is for the host buffer. For instance, the host and device buffers for a vector of floating point values x are both plain pointers to floating point values, named h_x and d_x respectively.

__host__ cudaError_t cudaMemcpy(void* dst, const void* src, size_t count, cudaMemcpyKind kind)

Both the copy to and the copy from the device buffer are done using the same function, and the direction of the copy is specified by the last argument, which is of the cudaMemcpyKind enumeration type. The enumeration can take the values cudaMemcpyHostToHost, cudaMemcpyHostToDevice, cudaMemcpyDeviceToHost, cudaMemcpyDeviceToDevice or cudaMemcpyDefault. Passing cudaMemcpyDefault makes the API deduce the direction of the transfer from the pointer values, but requires unified virtual addressing. The second to last argument is the size of the data to be copied, in bytes. The first two arguments can be either host or device pointers, depending on the direction of the transfer. This is where the h_ and d_ prefixes come in handy: this way we only need to remember the order in which the destination and the source arguments are specified. For instance, in a host to device copy call the destination is the d_ buffer, the source is the h_ buffer, and the last argument is cudaMemcpyHostToDevice.
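The buffer declarations and copy calls described in this section can be sketched as follows for element-wise vector addition. This is a minimal illustration under stated assumptions rather than a definitive implementation: the names `add_vectors` and `vector_add`, the output vector `z`, and the block size of 256 are choices made for this sketch, and `n` is assumed to be positive. Only `cudaMalloc`, `cudaMemcpy`, `cudaFree` and `cudaGetLastError` come from the CUDA runtime API.

```cuda
#include <cuda_runtime.h>

// Kernel: each thread adds one pair of elements.
__global__ void add_vectors(const float *d_x, const float *d_y, float *d_z, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n)                      // the last block may have unused threads
        d_z[i] = d_x[i] + d_y[i];
}

// Hypothetical helper: computes h_z = h_x + h_y on the device.
// h_ pointers live in host memory, d_ pointers in device memory.
// Assumes n > 0. Returns the first CUDA error encountered, if any.
cudaError_t vector_add(const float *h_x, const float *h_y, float *h_z, int n)
{
    const size_t num_bytes = static_cast<size_t>(n) * sizeof(float);

    // Device buffers are declared exactly like host ones: plain pointers.
    float *d_x = nullptr, *d_y = nullptr, *d_z = nullptr;

    // Allocate device buffers that mirror the host buffers.
    cudaError_t err = cudaMalloc((void **)&d_x, num_bytes);
    if (err == cudaSuccess) err = cudaMalloc((void **)&d_y, num_bytes);
    if (err == cudaSuccess) err = cudaMalloc((void **)&d_z, num_bytes);

    // Host to device: destination (d_) first, source (h_) second.
    if (err == cudaSuccess) err = cudaMemcpy(d_x, h_x, num_bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(d_y, h_y, num_bytes, cudaMemcpyHostToDevice);

    if (err == cudaSuccess) {
        const int threads_per_block = 256;
        const int blocks = (n + threads_per_block - 1) / threads_per_block;
        add_vectors<<<blocks, threads_per_block>>>(d_x, d_y, d_z, n);
        err = cudaGetLastError();   // reports launch errors
    }

    // Device to host: the same function, with the arguments swapped.
    if (err == cudaSuccess) err = cudaMemcpy(h_z, d_z, num_bytes, cudaMemcpyDeviceToHost);

    // Release device memory; cudaFree on a null pointer does nothing.
    cudaFree(d_x);
    cudaFree(d_y);
    cudaFree(d_z);
    return err;
}
```

With the prefixes in place, a swapped destination and source is easy to spot when reading the `cudaMemcpy` calls: the pointer whose prefix matches the second half of the `cudaMemcpyKind` name always comes first.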
